Harshad Suryawanshi • 2024-02-02

RAGArch:构建无代码 RAG Pipeline 配置和一键生成 RAG 代码工具,由 LlamaIndex 提供支持

释放 AI 的力量应该像使用您喜爱的应用一样直观。这就是 RAGArch 背后的理念,这是我的最新力作,旨在揭开和简化设置检索增强生成 (RAG) Pipeline 的过程。这个工具诞生于一个简单的愿景:提供一个直接、无代码的平台,赋能经验丰富的开发者和对 AI 世界充满好奇的探索者,让他们自信、轻松地构建、测试和实现 RAG Pipeline。

特性

RAGArch 利用 LlamaIndex 强大的 LLM 编排能力,提供无缝体验和对 RAG Pipeline 的精细控制。

- 直观的界面:RAGArch 用户友好的界面由 Streamlit 构建,让您可以交互式地测试不同的 RAG Pipeline 组件。

- 自定义配置:该应用提供了多种选项,用于配置语言模型、嵌入模型、节点解析器、响应合成方法和向量存储,以满足您项目的需求。

- 实时测试:使用您自己的数据即时测试您的 RAG Pipeline,并查看不同配置如何影响结果。

- 一键生成代码:一旦您对配置满意,该应用可以生成您自定义 RAG Pipeline 的 Python 代码,可直接集成到您的应用中。

工具和技术

RAGArch 的创建是通过集成各种强大的工具和技术实现的

- 用户界面:Streamlit

- 托管:Hugging Face Spaces

- LLM:OpenAI GPT 3.5 和 4,Cohere API,Gemini Pro

- LLM 编排:Llamaindex

- 嵌入模型:“BAAI/bge-small-en-v1.5”, “WhereIsAI/UAE-Large-V1”, “BAAI/bge-large-en-v1.5”, “khoa-klaytn/bge-small-en-v1.5-angle”, “BAAI/bge-base-en-v1.5”, “llmrails/ember-v1”, “jamesgpt1/sf_model_e5”, “thenlper/gte-large”, “infgrad/stella-base-en-v2” 和 “thenlper/gte-base”

- 向量存储:Simple (Llamaindex 默认), Pinecone 和 Qdrant

深入了解代码

app.py 脚本是 RAGArch 的核心,它集成了各种组件以提供一致的体验。以下是 app.py 的关键函数

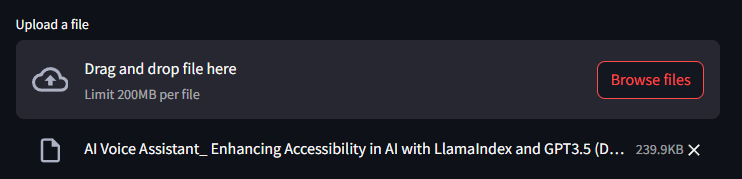

upload_file

此函数管理文件上传并使用 Llamaindex 的 SimpleDirectoryReader 将文档加载到系统中。它支持多种文档类型,包括 PDF、文本文件、HTML、JSON 文件等,使其能够灵活处理各种数据源。

def upload_file():

file = st.file_uploader("Upload a file", on_change=reset_pipeline_generated)

if file is not None:

file_path = save_uploaded_file(file)

if file_path:

loaded_file = SimpleDirectoryReader(input_files=[file_path]).load_data()

print(f"Total documents: {len(loaded_file)}")

st.success(f"File uploaded successfully. Total documents loaded: {len(loaded_file)}")

return loaded_file

return Nonesave_uploaded_file

此实用函数将上传的文件保存到服务器上的临时位置,以便后续处理。这是文件处理过程中的关键部分,可确保数据的完整性和可用性。

def save_uploaded_file(uploaded_file):

try:

with tempfile.NamedTemporaryFile(delete=False, suffix=os.path.splitext(uploaded_file.name)[1]) as tmp_file:

tmp_file.write(uploaded_file.getvalue())

return tmp_file.name

except Exception as e:

st.error(f"Error saving file: {e}")

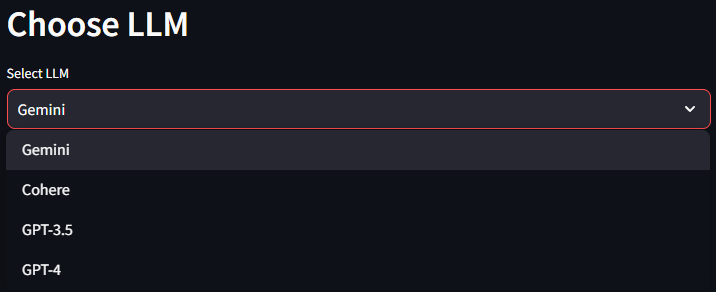

return Noneselect_llm

允许用户选择大型语言模型并初始化以供使用。您可以从 Google 的 Gemini Pro、Cohere、OpenAI 的 GPT 3.5 和 GPT 4 中选择。

def select_llm():

st.header("Choose LLM")

llm_choice = st.selectbox("Select LLM", ["Gemini", "Cohere", "GPT-3.5", "GPT-4"], on_change=reset_pipeline_generated)

if llm_choice == "GPT-3.5":

llm = OpenAI(temperature=0.1, model="gpt-3.5-turbo-1106")

st.write(f"{llm_choice} selected")

elif llm_choice == "GPT-4":

llm = OpenAI(temperature=0.1, model="gpt-4-1106-preview")

st.write(f"{llm_choice} selected")

elif llm_choice == "Gemini":

llm = Gemini(model="models/gemini-pro")

st.write(f"{llm_choice} selected")

elif llm_choice == "Cohere":

llm = Cohere(model="command", api_key=os.environ['COHERE_API_TOKEN'])

st.write(f"{llm_choice} selected")

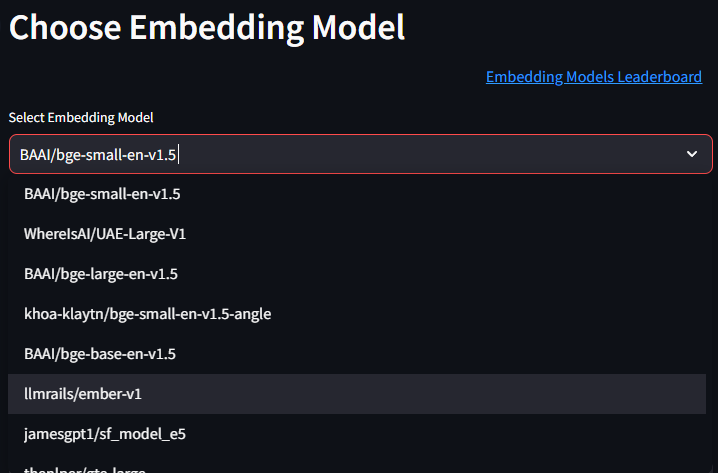

return llm, llm_choiceselect_embedding_model

提供一个下拉菜单,供用户从预定义列表中选择所需的嵌入模型。我收录了一些来自 Hugging Face 的 MTEB 排行榜上的顶级嵌入模型。在下拉菜单附近,我还包含了一个方便的链接,指向排行榜,用户可以在其中获取有关嵌入模型的更多信息。

def select_embedding_model():

st.header("Choose Embedding Model")

col1, col2 = st.columns([2,1])

with col2:

st.markdown("""

[Embedding Models Leaderboard](https://hugging-face.cn/spaces/mteb/leaderboard)

""")

model_names = [

"BAAI/bge-small-en-v1.5",

"WhereIsAI/UAE-Large-V1",

"BAAI/bge-large-en-v1.5",

"khoa-klaytn/bge-small-en-v1.5-angle",

"BAAI/bge-base-en-v1.5",

"llmrails/ember-v1",

"jamesgpt1/sf_model_e5",

"thenlper/gte-large",

"infgrad/stella-base-en-v2",

"thenlper/gte-base"

]

selected_model = st.selectbox("Select Embedding Model", model_names, on_change=reset_pipeline_generated)

with st.spinner("Please wait") as status:

embed_model = HuggingFaceEmbedding(model_name=selected_model)

st.session_state['embed_model'] = embed_model

st.markdown(F"Embedding Model: {embed_model.model_name}")

st.markdown(F"Embed Batch Size: {embed_model.embed_batch_size}")

st.markdown(F"Embed Batch Size: {embed_model.max_length}")

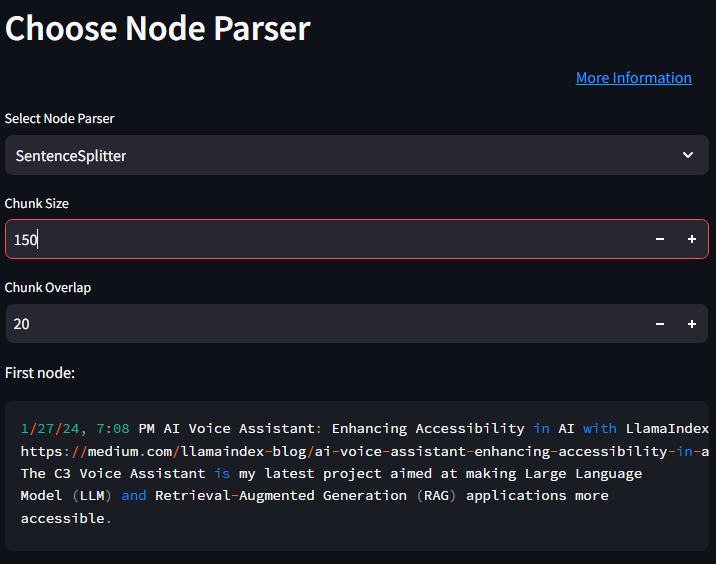

return embed_model, selected_modelselect_node_parser 函数

此函数允许用户选择节点解析器,它对于将文档分解为可管理的块或节点至关重要,有助于更好地处理和处理。我收录了一些 Llamaindex 支持的最常用的节点解析器,包括 SentenceSplitter、CodeSplitter、SemanticSplitterNodeParser、TokenTextSplitter、HTMLNodeParser、JSONNodeParser 和 MarkdownNodeParser。

def select_node_parser():

st.header("Choose Node Parser")

col1, col2 = st.columns([4,1])

with col2:

st.markdown("""

[More Information](https://docs.llamaindex.org.cn/en/stable/module_guides/loading/node_parsers/root.html)

""")

parser_types = ["SentenceSplitter", "CodeSplitter", "SemanticSplitterNodeParser",

"TokenTextSplitter", "HTMLNodeParser", "JSONNodeParser", "MarkdownNodeParser"]

parser_type = st.selectbox("Select Node Parser", parser_types, on_change=reset_pipeline_generated)

parser_params = {}

if parser_type == "HTMLNodeParser":

tags = st.text_input("Enter tags separated by commas", "p, h1")

tag_list = tags.split(',')

parser = HTMLNodeParser(tags=tag_list)

parser_params = {'tags': tag_list}

elif parser_type == "JSONNodeParser":

parser = JSONNodeParser()

elif parser_type == "MarkdownNodeParser":

parser = MarkdownNodeParser()

elif parser_type == "CodeSplitter":

language = st.text_input("Language", "python")

chunk_lines = st.number_input("Chunk Lines", min_value=1, value=40)

chunk_lines_overlap = st.number_input("Chunk Lines Overlap", min_value=0, value=15)

max_chars = st.number_input("Max Chars", min_value=1, value=1500)

parser = CodeSplitter(language=language, chunk_lines=chunk_lines, chunk_lines_overlap=chunk_lines_overlap, max_chars=max_chars)

parser_params = {'language': language, 'chunk_lines': chunk_lines, 'chunk_lines_overlap': chunk_lines_overlap, 'max_chars': max_chars}

elif parser_type == "SentenceSplitter":

chunk_size = st.number_input("Chunk Size", min_value=1, value=1024)

chunk_overlap = st.number_input("Chunk Overlap", min_value=0, value=20)

parser = SentenceSplitter(chunk_size=chunk_size, chunk_overlap=chunk_overlap)

parser_params = {'chunk_size': chunk_size, 'chunk_overlap': chunk_overlap}

elif parser_type == "SemanticSplitterNodeParser":

if 'embed_model' not in st.session_state:

st.warning("Please select an embedding model first.")

return None, None

embed_model = st.session_state['embed_model']

buffer_size = st.number_input("Buffer Size", min_value=1, value=1)

breakpoint_percentile_threshold = st.number_input("Breakpoint Percentile Threshold", min_value=0, max_value=100, value=95)

parser = SemanticSplitterNodeParser(buffer_size=buffer_size, breakpoint_percentile_threshold=breakpoint_percentile_threshold, embed_model=embed_model)

parser_params = {'buffer_size': buffer_size, 'breakpoint_percentile_threshold': breakpoint_percentile_threshold}

elif parser_type == "TokenTextSplitter":

chunk_size = st.number_input("Chunk Size", min_value=1, value=1024)

chunk_overlap = st.number_input("Chunk Overlap", min_value=0, value=20)

parser = TokenTextSplitter(chunk_size=chunk_size, chunk_overlap=chunk_overlap)

parser_params = {'chunk_size': chunk_size, 'chunk_overlap': chunk_overlap}

# Save the parser type and parameters to the session state

st.session_state['node_parser_type'] = parser_type

st.session_state['node_parser_params'] = parser_params

return parser, parser_type在节点解析器选择下方,我还包含了文本分割/解析后第一个节点的预览,以便用户了解根据所选节点解析器和相关参数实际是如何进行分块的。

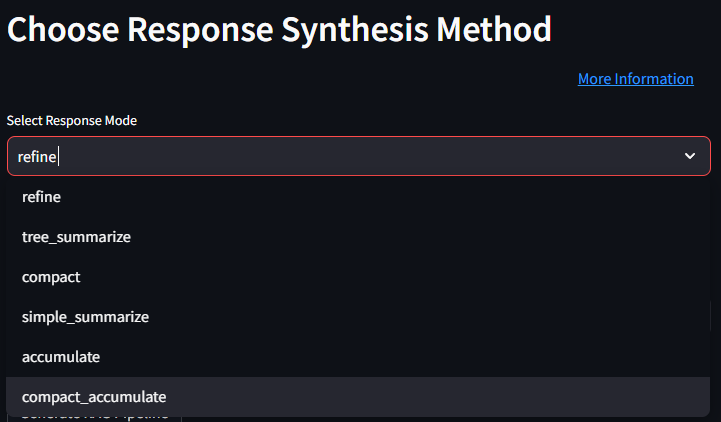

select_response_synthesis_method

此函数允许用户选择 RAG Pipeline 如何合成响应。我收录了 Llamaindex 支持的各种响应合成方法,包括 refine、tree_summarize、compact、simple_summarize、accumulate 和 compact_accumulate。

用户可以点击“更多信息”链接,了解有关响应合成和不同类型的更多详细信息。

def select_response_synthesis_method():

st.header("Choose Response Synthesis Method")

col1, col2 = st.columns([4,1])

with col2:

st.markdown("""

[More Information](https://docs.llamaindex.org.cn/en/stable/module_guides/querying/response_synthesizers/response_synthesizers.html)

""")

response_modes = [

"refine",

"tree_summarize",

"compact",

"simple_summarize",

"accumulate",

"compact_accumulate"

]

selected_mode = st.selectbox("Select Response Mode", response_modes, on_change=reset_pipeline_generated)

response_mode = selected_mode

return response_mode, selected_modeselect_vector_store

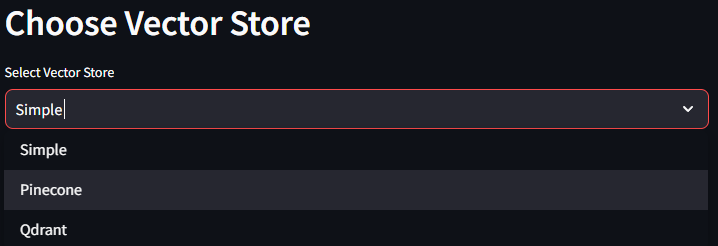

使用户能够选择向量存储,这是在 RAG Pipeline 中存储和检索嵌入的关键组件。此函数支持从多个向量存储选项中选择,包括 Simple (Llamaindex 默认)、Pinecone 和 Qdrant。

def select_vector_store():

st.header("Choose Vector Store")

vector_stores = ["Simple", "Pinecone", "Qdrant"]

selected_store = st.selectbox("Select Vector Store", vector_stores, on_change=reset_pipeline_generated)

vector_store = None

if selected_store == "Pinecone":

pc = Pinecone(api_key=os.environ['PINECONE_API_KEY'])

index = pc.Index("test")

vector_store = PineconeVectorStore(pinecone_index=index)

elif selected_store == "Qdrant":

client = qdrant_client.QdrantClient(location=":memory:")

vector_store = QdrantVectorStore(client=client, collection_name="sampledata")

st.write(selected_store)

return vector_store, selected_storegenerate_rag_pipeline 函数

此核心函数将所选组件绑定在一起以生成 RAG Pipeline。它使用所选的 LLM、嵌入模型、节点解析器、响应合成方法和向量存储来初始化 Pipeline。它通过按下“Generate RAG Pipeline”按钮触发。

def generate_rag_pipeline(file, llm, embed_model, node_parser, response_mode, vector_store):

if vector_store is not None:

# Set storage context if vector_store is not None

storage_context = StorageContext.from_defaults(vector_store=vector_store)

else:

storage_context = None

# Create the service context

service_context = ServiceContext.from_defaults(llm=llm, embed_model=embed_model, node_parser=node_parser)

# Create the vector index

vector_index = VectorStoreIndex.from_documents(documents=file, storage_context=storage_context, service_context=service_context, show_progress=True)

if storage_context:

vector_index.storage_context.persist(persist_dir="persist_dir")

# Create the query engine

query_engine = vector_index.as_query_engine(

response_mode=response_mode,

verbose=True,

)

return query_enginegenerate_code_snippet 函数

此函数是用户选择的最终结果,它生成实现已配置 RAG Pipeline 所需的 Python 代码。它根据所选的 LLM、嵌入模型、节点解析器、响应合成方法和向量存储动态构建代码片段,包括为节点解析器设置的参数。

def generate_code_snippet(llm_choice, embed_model_choice, node_parser_choice, response_mode, vector_store_choice):

node_parser_params = st.session_state.get('node_parser_params', {})

print(node_parser_params)

code_snippet = "from llama_index.llms import OpenAI, Gemini, Cohere\n"

code_snippet += "from llama_index.embeddings import HuggingFaceEmbedding\n"

code_snippet += "from llama_index import ServiceContext, VectorStoreIndex, StorageContext\n"

code_snippet += "from llama_index.node_parser import SentenceSplitter, CodeSplitter, SemanticSplitterNodeParser, TokenTextSplitter\n"

code_snippet += "from llama_index.node_parser.file import HTMLNodeParser, JSONNodeParser, MarkdownNodeParser\n"

code_snippet += "from llama_index.vector_stores import MilvusVectorStore, QdrantVectorStore\n"

code_snippet += "import qdrant_client\n\n"

# LLM initialization

if llm_choice == "GPT-3.5":

code_snippet += "llm = OpenAI(temperature=0.1, model='gpt-3.5-turbo-1106')\n"

elif llm_choice == "GPT-4":

code_snippet += "llm = OpenAI(temperature=0.1, model='gpt-4-1106-preview')\n"

elif llm_choice == "Gemini":

code_snippet += "llm = Gemini(model='models/gemini-pro')\n"

elif llm_choice == "Cohere":

code_snippet += "llm = Cohere(model='command', api_key='<YOUR_API_KEY>') # Replace <YOUR_API_KEY> with your actual API key\n"

# Embedding model initialization

code_snippet += f"embed_model = HuggingFaceEmbedding(model_name='{embed_model_choice}')\n\n"

# Node parser initialization

node_parsers = {

"SentenceSplitter": f"SentenceSplitter(chunk_size={node_parser_params.get('chunk_size', 1024)}, chunk_overlap={node_parser_params.get('chunk_overlap', 20)})",

"CodeSplitter": f"CodeSplitter(language={node_parser_params.get('language', 'python')}, chunk_lines={node_parser_params.get('chunk_lines', 40)}, chunk_lines_overlap={node_parser_params.get('chunk_lines_overlap', 15)}, max_chars={node_parser_params.get('max_chars', 1500)})",

"SemanticSplitterNodeParser": f"SemanticSplitterNodeParser(buffer_size={node_parser_params.get('buffer_size', 1)}, breakpoint_percentile_threshold={node_parser_params.get('breakpoint_percentile_threshold', 95)}, embed_model=embed_model)",

"TokenTextSplitter": f"TokenTextSplitter(chunk_size={node_parser_params.get('chunk_size', 1024)}, chunk_overlap={node_parser_params.get('chunk_overlap', 20)})",

"HTMLNodeParser": f"HTMLNodeParser(tags={node_parser_params.get('tags', ['p', 'h1'])})",

"JSONNodeParser": "JSONNodeParser()",

"MarkdownNodeParser": "MarkdownNodeParser()"

}

code_snippet += f"node_parser = {node_parsers[node_parser_choice]}\n\n"

# Response mode

code_snippet += f"response_mode = '{response_mode}'\n\n"

# Vector store initialization

if vector_store_choice == "Pinecone":

code_snippet += "pc = Pinecone(api_key=os.environ['PINECONE_API_KEY'])\n"

code_snippet += "index = pc.Index('test')\n"

code_snippet += "vector_store = PineconeVectorStore(pinecone_index=index)\n"

elif vector_store_choice == "Qdrant":

code_snippet += "client = qdrant_client.QdrantClient(location=':memory:')\n"

code_snippet += "vector_store = QdrantVectorStore(client=client, collection_name='sampledata')\n"

elif vector_store_choice == "Simple":

code_snippet += "vector_store = None # Simple in-memory vector store selected\n"

code_snippet += "\n# Finalizing the RAG pipeline setup\n"

code_snippet += "if vector_store is not None:\n"

code_snippet += " storage_context = StorageContext.from_defaults(vector_store=vector_store)\n"

code_snippet += "else:\n"

code_snippet += " storage_context = None\n\n"

code_snippet += "service_context = ServiceContext.from_defaults(llm=llm, embed_model=embed_model, node_parser=node_parser)\n\n"

code_snippet += "_file = 'path_to_your_file' # Replace with the path to your file\n"

code_snippet += "vector_index = VectorStoreIndex.from_documents(documents=_file, storage_context=storage_context, service_context=service_context, show_progress=True)\n"

code_snippet += "if storage_context:\n"

code_snippet += " vector_index.storage_context.persist(persist_dir='persist_dir')\n\n"

code_snippet += "query_engine = vector_index.as_query_engine(response_mode=response_mode, verbose=True)\n"

return code_snippet结论

RAGArch 站在创新与实用性的交汇点,提供了一种简化的无代码方法来进行 RAG Pipeline 开发。它旨在揭示 AI 配置的复杂性。借助 RAGArch,经验丰富的开发者和 AI 爱好者都可以轻松构建自定义 Pipeline,加速从想法到实现的进程。

随着我不断改进这个工具,您的见解和贡献是宝贵的。请在 Github 上查看 RAGArch,并在 Linkedin 上与我交流。我总是渴望与技术领域的探索者们合作并分享知识。